Attenuation in Cable

Getting your network setup right depends on many different factors. The main things to look at are the type of cable, its quality, where you're installing it, and good installation practices. When you install Ethernet cables, a phenomenon called attenuation occurs along the cable. In this article we will cover what attenuation is and how it can affect your cable.

What Is Attenuation?

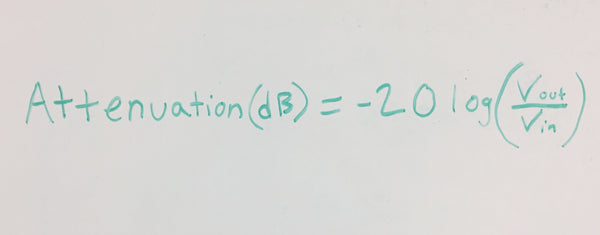

Attenuation in a cable is the ratio, expressed in decibels, of the input power (or voltage) to the output power (or voltage) when the load and source impedances are matched to the characteristic impedance of the cable. If your terminations are done properly and are matched, this ratio of input to output power or voltage is the cable's attenuation. Real-life measurements will produce values higher than the attenuation, depending on the degree of mismatch. In terms of a voltage ratio, attenuation can be determined with this expression:

$$\text{Attenuation (dB)} = 20 \log_{10}\left(\frac{V_{in}}{V_{out}}\right)$$

Where Vin = Input Voltage and Vout = Output Voltage.
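To make the decibel expression concrete, here is a minimal Python sketch of the standard forms: Attenuation (dB) = 20 log10(Vin / Vout) for voltages and 10 log10(Pin / Pout) for powers. The function names are hypothetical and chosen for illustration. The example assumes matched impedances, so the ratio reflects attenuation alone.

```python
import math


def attenuation_db_from_voltage(v_in: float, v_out: float) -> float:
    """Attenuation in dB from a voltage ratio: 20 * log10(Vin / Vout)."""
    if v_in <= 0 or v_out <= 0:
        raise ValueError("voltages must be positive")
    return 20 * math.log10(v_in / v_out)


def attenuation_db_from_power(p_in: float, p_out: float) -> float:
    """Attenuation in dB from a power ratio: 10 * log10(Pin / Pout)."""
    if p_in <= 0 or p_out <= 0:
        raise ValueError("powers must be positive")
    return 10 * math.log10(p_in / p_out)


# A 1.0 V signal that arrives as 0.5 V has lost half its voltage.
print(f"{attenuation_db_from_voltage(1.0, 0.5):.2f} dB")  # 6.02 dB
# Losing half the power instead corresponds to about 3 dB.
print(f"{attenuation_db_from_power(1.0, 0.5):.2f} dB")    # 3.01 dB
```

A higher dB figure means more signal was lost between the two ends of the cable.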

The Presence of Attenuation in Cables

Attenuation affects a cable by reducing the strength of the signal as it travels along the cable. The more attenuation there is, the weaker the signal becomes and the more limited your communication is. Another major factor that degrades the signal is noise on the network.

Noise can come from nearby power cables, radio signals, electrical currents, and even poorly terminated cables. Attenuation also increases as cables get longer, so the longer the cable run, the weaker the signal will be by the time it reaches the other end.
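Cable datasheets usually quote attenuation in decibels per 100 meters at a given frequency. For a uniform cable, the total loss in dB grows in proportion to length. The sketch below uses a hypothetical rating of 20 dB per 100 m, which is not a figure from any standard or product. It shows how quickly the remaining signal shrinks as the run gets longer.

```python
def total_attenuation_db(db_per_100m: float, length_m: float) -> float:
    """Scale a per-100-meter attenuation rating linearly to the actual run length."""
    return db_per_100m * length_m / 100.0


def voltage_remaining(attenuation_db: float) -> float:
    """Fraction of the input voltage left at the far end: Vout / Vin = 10 ** (-dB / 20)."""
    return 10 ** (-attenuation_db / 20.0)


# Hypothetical rating for illustration only; real values depend on the
# cable category, the signal frequency, and temperature.
RATING_DB_PER_100M = 20.0

for length_m in (25, 50, 100):
    loss_db = total_attenuation_db(RATING_DB_PER_100M, length_m)
    print(f"{length_m:>3} m: {loss_db:4.1f} dB loss, "
          f"{voltage_remaining(loss_db):.0%} of Vin reaches the far end")
```

Under this assumed rating, doubling the run from 50 m to 100 m doubles the loss in dB. However, the voltage at the far end falls from roughly a third of Vin to a tenth, because decibels are logarithmic.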

The goal with Ethernet cables is to keep attenuation as low as possible. There are several ways to achieve this, so let's get into what can be done.

How To Reduce The Occurrence of Attenuation?

One way to combat interference is to use shielded Ethernet cables. Shielded Ethernet cables do a great job of protecting your cables from electromagnetic interference (EMI), and they also help your cable perform better at higher frequencies.

Another aspect to take into consideration when installing cable is the surrounding environment. If your cable is exposed to the weather and is not suited for outdoor conditions, its attenuation can increase and it will start to lose signal strength. One way to tackle this is to use outdoor-rated cable, which is built for outdoor conditions with the proper jacket material. Outdoor cables are suited for conditions such as UV exposure and rain, and many outdoor cables can also be buried in the ground.

As we mentioned previously, one of the biggest causes of attenuation is cable distance. The recommended channel length for most categories of cable is 100 meters (328 feet). Those 100 meters consist of 90 meters of cable backbone and 10 meters of patch cable to your end device. By backbone we mean the longest portion of the cable run. This is usually the cable that runs inside the walls, plenum areas, risers, or wherever your cable needs to go.

This type of cable is usually solid conductor cable. Once you go beyond 100 meters, you run into problems such as decreasing signal strength and reduced performance from the cable category. That is why it is always recommended to follow standards and good practices for cable terminations and cable lengths when running cables in your home or business.

Solid conductor cable is going to give you better performance at longer distances and will have lower attenuation than stranded cable.
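The 90 meter backbone plus 10 meter patch cable budget can be written as a simple planning check. This is a simplified sketch that uses only the limits stated in this article. `check_channel` and the constant names are hypothetical. A real certification test measures the installed link rather than just adding up lengths.

```python
MAX_BACKBONE_M = 90   # backbone: solid cable in walls, plenum areas, risers
MAX_PATCH_M = 10      # patch cable to the end device
MAX_CHANNEL_M = 100   # recommended total channel length


def check_channel(backbone_m: float, patch_m: float) -> list[str]:
    """Return warnings for a planned run; an empty list means it fits the budget."""
    warnings: list[str] = []
    if backbone_m > MAX_BACKBONE_M:
        warnings.append(f"backbone {backbone_m:g} m exceeds {MAX_BACKBONE_M} m")
    if patch_m > MAX_PATCH_M:
        warnings.append(f"patch cable {patch_m:g} m exceeds {MAX_PATCH_M} m")
    total = backbone_m + patch_m
    if total > MAX_CHANNEL_M:
        warnings.append(f"channel {total:g} m exceeds {MAX_CHANNEL_M} m")
    return warnings


def meters_to_feet(meters: float) -> float:
    """Convert meters to feet (1 ft = 0.3048 m exactly)."""
    return meters / 0.3048


for backbone, patch in [(85, 5), (95, 8)]:
    issues = check_channel(backbone, patch)
    print(f"{backbone} m + {patch} m:", "OK" if not issues else "; ".join(issues))

print(f"{MAX_CHANNEL_M} m is about {meters_to_feet(MAX_CHANNEL_M):.0f} ft")
```

The second planned run fails on two counts. Its backbone is too long, and together with the patch cable it exceeds the 100 meter channel.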


Conclusion

Attenuation in cable is something that will always happen. Preparing for it will give you the best chance to reduce the effect it can have. The amount of attenuation will vary from install to install, but following these tips will give you better performance in your network.

Happy Cabling!